Every January, founders set goals for the year ahead. By March, half of those goals are already wrong. Not because the founders weren’t committed, but because the environment moved faster than the plan could absorb. A new AI model drops. A competitor restructures their pricing. A client segment shifts its expectations. The annual goal assumes the world stays roughly stable. It doesn’t, and in industries shaped by AI tools, it definitely doesn’t.

I stopped doing annual goals two years ago. Not because I gave up on planning, but because I got tired of spending six months chasing a target that was wrong by February. The 90-day sprint is a better unit. Short enough to stay relevant, long enough to actually finish something worth finishing. This post covers how I reverse-engineer 90-day goals into working sprint structures that survive contact with reality.

What this post covers: a working system for reverse-engineering 90-day goals into structured sprints, built for founders and agency operators in fast-moving, AI-affected industries. Who it’s for: creative directors, founders, and operators who’ve tried annual planning and found it breaks down too quickly. What you take away: a concrete process for building sprints that are specific, checkable, and durable enough to survive real work weeks.


Why Annual Goals Break Down Fast

Annual goals fail not because the goals are bad, but because the feedback loop is too slow.

If you set a revenue target in January and realize in March that the model is broken, you spend the next nine months either chasing a wrong target or resetting from scratch. In knowledge work and creative services, the cost of that correction is enormous. Not always in money, but in time, in focus, and in the compounding delay of starting the right thing too late.

According to a 2023 McKinsey Global Institute report on organizational agility, companies running quarterly planning reviews adapt to market shifts 2.3 times faster than those using annual cycles. That research focused on enterprise teams, but the pattern holds at the founder and agency level. The faster your environment changes, the shorter your planning unit needs to be.

For founders working with AI tools, this is especially true. The arrival of a new model, a new integration, or a new competitive capability can change your positioning, your pricing, and your service design. These aren’t annual developments. They happen continuously. The 90-day sprint gives you a planning unit short enough to absorb this without abandoning structure entirely. If you want to see how sprint thinking fits into a broader approach to creative and AI work, that context is useful before going further.

What a 90-Day Sprint Actually Is

A sprint has a different structure from an annual goal, not just a compressed timeline.

An annual goal asks: where do I want to be in 12 months?

A sprint asks: what is the most important thing I can complete in 90 days, and what does done look like on day 90?

The second question is harder to answer because it forces specificity. “Grow revenue” is not a sprint. “Close 4 new B2B retainers at a minimum monthly value before day 90” is a sprint. One is a direction. The other is a destination with a clear arrival condition.

I run three sprints per year with a one-week reset gap between each. That reset week is not a holiday. It is diagnostic. What did I expect to happen versus what actually happened? What patterns do I notice across both? What am I keeping, what am I cutting?

The three-sprint structure aligns roughly with a working calendar: January through March, April through June, and July through September, with October through December serving as a consolidation and planning quarter. I don’t treat the dates as rigid boundaries, but I do hold the shape fixed: three sprints, a reset after each, and one consolidation quarter. Keeping that shape matters more than being flexible about it.

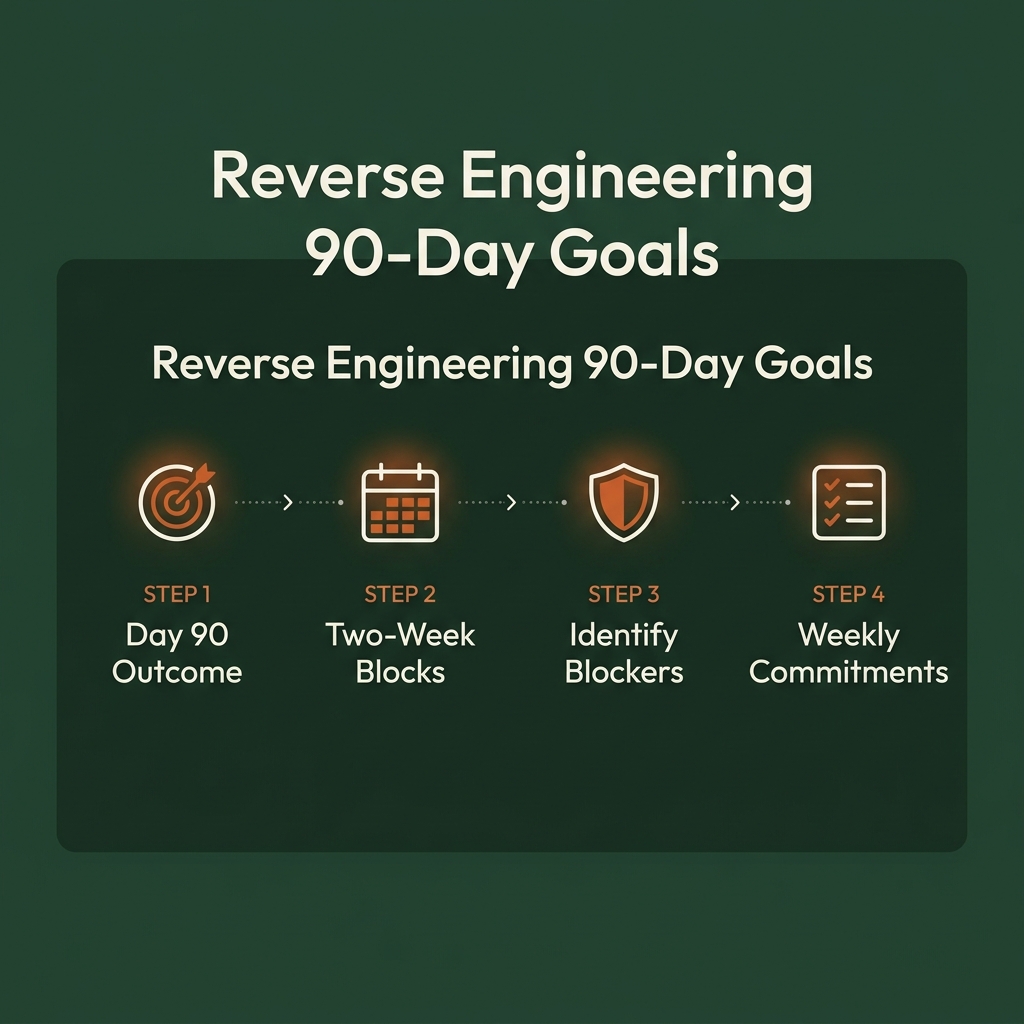

How to Reverse Engineer 90-Day Goals

Most planning starts from where you are and projects forward. Reverse engineering starts from day 90 and works backward. This changes what you include, what you drop, and whether the math actually holds before you commit to it.

Step 1: Name the single outcome at day 90.

Not three outcomes. One. The constraint is deliberate. If you cannot name one outcome without adding “and also,” you don’t have a sprint yet. You have ambitions.

For example: “By day 90, I have 12 long-form posts published on byharshal.com, each targeting a primary keyword that is currently outside the top 30 on Google Search Console.” That is a sprint. It is complete, specific, and checkable. You can read about how this kind of structured content goal fits into a larger blog and content framework for context on why the specificity matters.

Step 2: Work backwards in two-week blocks.

Divide the 90 days into six blocks of 14 days each. That covers 84 days and leaves six days of slack at the end. Assign a checkpoint to each block. A checkpoint is something you can check: a deliverable, not a behavior. “Work on content” is not a checkpoint. “Publish posts 1-2 and complete keyword research for posts 3-6” is a checkpoint.

This is where the reverse engineering test happens. If the math breaks at this step, which it often does, you adjust the day-90 outcome rather than pretending the blocks will somehow work out. Better to reset the destination now than to discover the math is broken at month two.
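
As a rough sketch, the backward math in Step 2 could look like this in Python. The function name `plan_blocks` and the capacity figure of two posts per block are hypothetical, chosen only to illustrate the check with the 12-post example from Step 1.

```python
import math

SPRINT_DAYS = 90
BLOCK_DAYS = 14


def plan_blocks(target_units, capacity_per_block):
    """Work backward from the day-90 target to per-block checkpoints.

    Returns (checkpoints, feasible): the cumulative total due at the end
    of each block, and whether the per-block load fits the stated capacity.
    """
    n_blocks = SPRINT_DAYS // BLOCK_DAYS            # 6 full blocks (84 days)
    per_block = math.ceil(target_units / n_blocks)  # load each block must carry
    checkpoints = []
    for block in range(1, n_blocks + 1):
        # Cumulative total due by the end of this block, capped at the target.
        due = min(per_block * block, target_units)
        checkpoints.append(
            {"block": block, "end_day": block * BLOCK_DAYS, "cumulative_due": due}
        )
    feasible = per_block <= capacity_per_block
    return checkpoints, feasible


# Assumed example: 12 posts by day 90, realistic capacity of 2 posts per block.
checkpoints, feasible = plan_blocks(target_units=12, capacity_per_block=2)
for cp in checkpoints:
    print(cp)
print("Math holds:", feasible)
```

If `feasible` comes back `False`, that is the signal to shrink the day-90 outcome before committing, rather than hoping the blocks will somehow work out.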

Step 3: Identify blockers before they appear.

For each two-week block, write down what could stop you from hitting the checkpoint. Then write down what you will do when that happens. Not if. When.

This is the step most people skip. They skip it because thinking about failure feels counterproductive. But a plan with no failure response is just an optimistic spreadsheet. The founders who consistently finish sprints are the ones who have pre-decided their responses to the most common blockers. A client emergency in week two is not a surprise. How you respond to it should not require a new decision.

Step 4: Set weekly commitments, not daily tasks.

Daily task lists don’t survive real work. A meeting overruns. A client fires back. A deliverable takes twice as long. The whole day breaks down, and the task list becomes evidence of failure rather than a guide.

Weekly commitments are more durable. At the start of each week, you commit to one to three things that, if done, move the sprint forward. These are protected. Other work happens around them. According to researcher Cal Newport’s work in Deep Work (2016), the most consistent performers in knowledge-intensive roles structure their output around commitments rather than tasks, because commitments survive disruption in ways that daily task lists do not. In agency work specifically, this has held for me across project types and client intensities.

The Two-Week Block System

The two-week block is the structural unit of the sprint. Each block ends with a brief review: did I hit the checkpoint? If yes, proceed. If no, what shifted?

The review should take fifteen minutes, not two hours. Three questions:

- Did I hit the checkpoint? Yes or no.

- If no: was the checkpoint wrong, or was my execution wrong?

- What do I carry into the next block?

If you missed the checkpoint because the checkpoint was wrong, adjust the sprint. If you missed it because the work didn’t happen, that is a different diagnosis.

I track block reviews in a running Notion document. The record of prior sprints is genuinely useful. Six months of block reviews shows patterns a single retrospective cannot: which kinds of work consistently slip, which blockers repeat, and whether your capacity estimates have improved over time. If you use Notion as your planning base, the AI Orchestra Workflow resource shows how I connect planning, review, and execution in one system.
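
To make the pattern-spotting concrete, here is a small Python sketch that tallies a review log. The records and field names (`work_type`, `hit`, `cause`) are hypothetical sample data, not an actual Notion export; any real log would need to be mapped into a shape like this first.

```python
from collections import Counter

# Hypothetical block-review records; field names and values are illustrative only.
reviews = [
    {"block": 1, "work_type": "writing", "hit": True, "cause": None},
    {"block": 2, "work_type": "outreach", "hit": False, "cause": "execution"},
    {"block": 3, "work_type": "writing", "hit": True, "cause": None},
    {"block": 4, "work_type": "outreach", "hit": False, "cause": "checkpoint"},
    {"block": 5, "work_type": "research", "hit": False, "cause": "execution"},
]


def summarize(reviews):
    """Count missed checkpoints by kind of work and by diagnosed cause."""
    missed = [r for r in reviews if not r["hit"]]
    by_type = Counter(r["work_type"] for r in missed)
    by_cause = Counter(r["cause"] for r in missed)
    return by_type, by_cause


by_type, by_cause = summarize(reviews)
print("Misses by kind of work:", by_type.most_common())
print("Misses by cause:", by_cause.most_common())
```

The `cause` field mirrors the second review question: a miss is tagged either `checkpoint` (the checkpoint was wrong) or `execution` (the work didn’t happen), so the two diagnoses stay separate in the tally.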

The Weekly Operating Rhythm

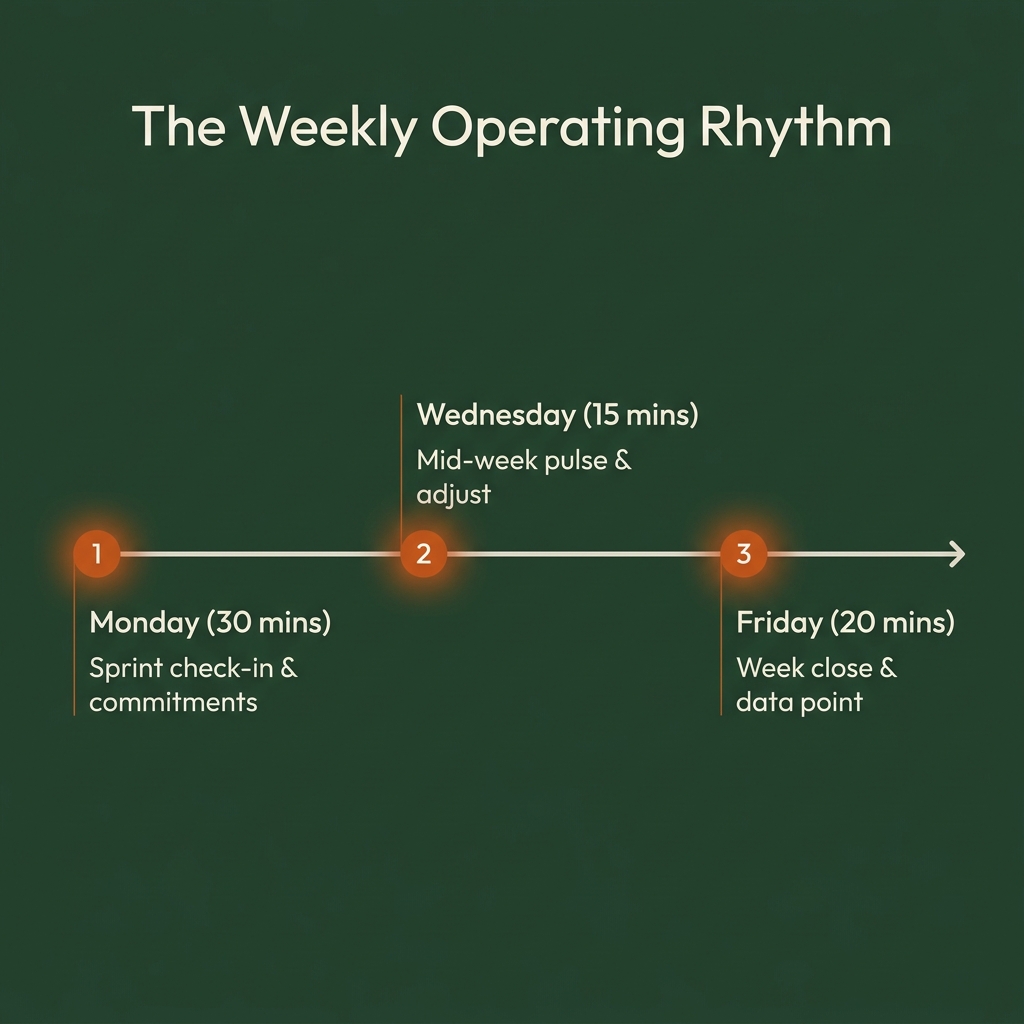

The sprint needs a weekly system to stay alive. Here is mine, taking roughly 65 minutes across the week:

Monday (30 minutes): Sprint check-in. Where am I against the current two-week block? What are this week’s commitments? Write them down by hand. Not in a tool. Writing by hand makes the commitment feel different from typing it into a field.

Wednesday (15 minutes): Mid-week pulse. Am I on track for this week’s commitments? If not, what adjusts? Sometimes the answer is that a commitment shifts to later in the week. Sometimes it is that Thursday and Friday need to be fully protected.

Friday (20 minutes): Week close. What got done, what didn’t, what I’m carrying forward. Write one sentence per item. This is not a journal entry. It is a data point that Monday’s planning depends on.

The discipline is not to miss these, particularly the Friday close. When you skip it, you arrive at Monday without context and spend your planning time reconstructing what happened instead of deciding what matters this week.

Using AI Inside Your Sprint Review

AI is useful for reviewing the sprint and checking its math, not for making the decisions inside it.

I keep a running sprint document in Notion. Every Monday, I paste the previous Friday’s close note into a Claude conversation and ask it to compare my stated commitments to my reported outputs. I ask it to flag recurring patterns, particularly anything that looks structural rather than situational.

This takes about five minutes. The output is occasionally humbling. It surfaces patterns I have been too close to see, especially when I’ve had a string of high-output weeks where self-monitoring gets harder. It is not a substitute for judgment, but it extends my ability to catch drift before it compounds.

I also use Claude to run the initial reverse-engineering math. If I describe the sprint outcome and my available capacity, it breaks it into block checkpoints and flags overloads before I commit to them. The output isn’t always calibrated, since it doesn’t know my actual constraints and history. But it is faster than doing the math from scratch and often catches a structural error I would have missed. The full workflow for how AI fits into planning and execution is covered in the AI Orchestra Workflow documentation.

The rule is consistent: AI reviews the sprint. I make the decisions about the sprint.

When the Environment Shifts Mid-Sprint

This is the specific challenge for founders operating in AI-affected industries, and the most common reason sprints fall apart quietly rather than failing cleanly.

The problem is not that things change. Things always change. The problem is that AI tools create step-change disruptions, not gradual ones. A new model can change what your core service costs to produce, which changes your margin structure, which may break your hiring plan and your sprint in the same week.

When this happens, the question is not “do I abandon the sprint?” The right question is: has the outcome at day 90 become wrong, or has the path to it changed?

If the outcome is still correct but the path changed, adjust the block checkpoints and continue. The destination is stable. You are rerouting.

If the outcome itself is wrong, stop, document why, and run a one-day reset. Write a new day-90 outcome, rebuild the blocks from that new endpoint, and restart the sprint cleanly. Don’t let the old sprint die by neglect while you informally pursue a new one. That produces neither the old result nor the new one.
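
The two-question test above can be written down as a tiny decision rule. This is only an illustrative Python sketch; the function name `respond_to_shift` and its two boolean inputs are assumptions, and the judgment about whether the outcome is still correct remains yours to make.

```python
def respond_to_shift(outcome_still_correct, path_still_works):
    """Return the next action after a mid-sprint environment shift."""
    if not outcome_still_correct:
        # Wrong destination: close the old sprint on purpose and start clean.
        return "reset: document why, write a new day-90 outcome, rebuild the blocks"
    if not path_still_works:
        # Same destination, different route.
        return "reroute: keep the outcome, adjust the block checkpoints"
    return "continue: no change needed"


# Example: a new model changes production cost, but the day-90 outcome still stands.
print(respond_to_shift(outcome_still_correct=True, path_still_works=False))
```

Checking the outcome first is deliberate: if the destination is wrong, there is no point repairing the path to it.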

The founders I see fail at sprint planning are usually not lazy or undisciplined. They are running two sprints at once. An official one they are not actually working on, and an informal one they started when the environment shifted. Naming the shift, closing the old sprint intentionally, and starting a new one is the only way out of that pattern. The same principle applies in client and creative work, which I’ve written about in the context of how I structure AI-conducted workflows.

Key Takeaways

- Annual goals have feedback loops too slow for AI-affected industries. 90-day sprints are a better planning unit for high-velocity environments.

- A sprint starts with one specific outcome at day 90. Not a list. One destination with a clear arrival condition.

- Reverse engineer from day 90 back to today using six two-week blocks, each with a concrete, checkable checkpoint.

- Identify blockers and your response to them before the sprint begins. Pre-deciding is faster than deciding under pressure.

- Weekly commitments survive real work weeks better than daily task lists. Set one to three per week, protect them, build other work around them.

- AI is useful for sprint reviews and reverse-engineering math. It is not useful for making the decisions that belong to you.

- When the environment shifts mid-sprint, distinguish between a changed path (adjust the block checkpoints) and a wrong destination (reset cleanly with a new sprint).

Frequently Asked Questions

What if my sprint goal becomes irrelevant mid-sprint?

Stop and diagnose before abandoning it. Ask whether the goal is actually wrong or whether you’re behind and looking for an exit. If the environment genuinely shifted the destination, reset cleanly with a new sprint document. If the path changed but the destination is still correct, adjust the block checkpoints and continue. The key is to close the old sprint intentionally, not let it die quietly while you start a new one informally.

How many sprint goals should I run at once?

One primary. You can have supporting goals, but there should be one thing that, if you only did this and nothing else, you’d call the sprint successful. Multiple equal-priority goals tend to produce multiple half-finished results, not multiple completed ones.

Should I use this system for personal goals, not just work?

Yes, with the same rules. One outcome, reverse-engineered blocks, weekly commitments. I run separate personal sprints rather than combined ones. Mixing professional and personal goals in one sprint creates prioritization conflicts when they compete, which they always do at the worst possible time.

How do I handle client work that expands into sprint time?

This is a capacity problem, not a sprint design problem. If client work consistently displaces your sprint commitments, you are over-committed. The sprint makes this visible rather than hiding it. The fix is to either reduce client load or accept a longer timeline for the sprint outcome. The sprint won’t protect you from over-commitment, but it will make it harder to ignore.

What tools do I need to run this system?

Notion for the sprint document and block review log, Claude for Monday reviews, and a physical notebook for weekly planning. Nothing more. Adding more tools is adding friction between you and the actual work.

Harshal Saraf is a Creative Director and AI Workflow Consultant based in Indore, India. Under his practice ByHarshal, he sets up AI workflows for founders, agencies, and brands across India. Where Creative Direction Meets AI Orchestration. He has led creative direction for brands and small and medium scale B2B businesses, and currently works as Creative Director and AI Workflow Strategist at Square Root SEO. He writes Oh So AI, a Tuesday and Friday newsletter on AI tools, workflows, and productivity for founders and creatives.